Modern Intel (and AMD) processors support in hardware the single, double and 80-bit extended formats based on the IEEE 754 standard. Traditionally, we refer to this function of the microprocessor hardware as the Floating Point Unit (FPU). Back in the early days of the PC, floating point arithmetic was mostly done in software. Hardware support came in the form of a physically separate coprocessor. Many PC motherboards from those days included a socket for a numeric coprocessor, such as the Intel 8087.

Using floating point data types that are supported in hardware is by far the preferred option, chiefly for reasons of performance. Other formats are still around, for instance the 6-byte wide real48 format in Delphi. These formats have to be implemented entirely in software, so they are slow and cumbersome. Programmers should avoid such non-native types as much as possible. If compatibility requires a format that the hardware does not support, limit its use by converting to one of the hardware-supported formats immediately and converting back only at the very end. I will focus in this article on the native single, double and extended formats.
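As an illustration of "convert immediately", here is a minimal Python sketch that turns a 6-byte real48 value into a native double. The layout it assumes (8-bit exponent with a bias of 129 in the lowest byte, a 39-bit fraction above it, the sign in the top bit, and a stored exponent of 0 meaning zero) is my reading of Delphi's documented Real48 format and is not taken from this article; the function name `real48_to_float` is hypothetical.

```python
def real48_to_float(raw: bytes) -> float:
    """Convert 6 little-endian bytes in the assumed Real48 layout to a double."""
    if len(raw) != 6:
        raise ValueError("a real48 value is exactly 6 bytes")
    bits = int.from_bytes(raw, "little")
    stored_exponent = bits & 0xFF              # assumed: bits 0-7
    fraction = (bits >> 8) & ((1 << 39) - 1)   # assumed: bits 8-46
    sign = bits >> 47                          # assumed: bit 47
    if stored_exponent == 0:
        return 0.0                             # assumed: exponent 0 encodes zero
    # Implicit leading 1, then remove the assumed bias of 129
    significand = 1 + fraction / 2**39
    value = significand * 2.0 ** (stored_exponent - 129)
    return -value if sign else value


one = real48_to_float(bytes([0x81, 0, 0, 0, 0, 0x00]))
one_and_a_half = real48_to_float(bytes([0x81, 0, 0, 0, 0, 0x40]))
minus_two = real48_to_float(bytes([0x82, 0, 0, 0, 0, 0x80]))
print(one, one_and_a_half, minus_two)
```

After this single conversion, all further arithmetic runs on a hardware-supported type.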

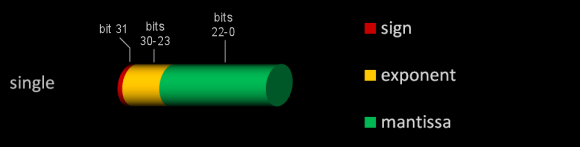

Let's examine the single format a bit more closely. As the illustration above shows, it encodes numbers as follows:

bits 0-22 = Mantissa; bits 23-30 = Exponent; bit 31 = Sign

The mantissa is in fact not 23, but 24 bits wide. So where is the 24th bit? The answer is simple, but at the same time ingenious: the value is stored normalised. This means that the digit before the binary point (which can only be 0 or 1 in the binary system, of course) is always 1. By adjusting the exponent, the value can be scaled so that the digit before the binary point equals 1. Because this leading digit of the mantissa is known to be 1, there is no need to store it.

The exponent is biased: to get the real value, you must subtract 127 from the stored value. Thus, when the stored exponent is less than 127, the actual exponent is negative and the magnitude of the number represented is less than 1. The stored exponent 255 (all bits set) is reserved: with a zero mantissa it indicates infinity, and with a non-zero mantissa it indicates NaN (Not a Number). The stored exponent 0 is reserved as well, for zero and denormalised numbers. The sign bit determines the sign of the number, with a cleared bit (0) indicating a positive number and a set bit (1) a negative one.
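The reserved exponent patterns can be checked with a short Python sketch. It relies on the IEEE 754 rules that an all-ones exponent encodes infinity when the mantissa is zero and NaN otherwise, and that an all-zero exponent encodes zero or a denormalised number. The helper names `single_from_bits` and `classify_single` are hypothetical.

```python
import math
import struct


def single_from_bits(bits: int) -> float:
    """Reinterpret a 32-bit pattern as an IEEE 754 single."""
    return struct.unpack(">f", struct.pack(">I", bits))[0]


def classify_single(bits: int) -> str:
    """Name the class of a single from its exponent and mantissa fields."""
    stored_exponent = (bits >> 23) & 0xFF   # bits 23-30
    fraction = bits & 0x7FFFFF              # bits 0-22
    if stored_exponent == 0xFF:
        return "infinity" if fraction == 0 else "nan"
    if stored_exponent == 0:
        return "zero" if fraction == 0 else "denormal"
    return "normal"


for pattern in (0x7F800000, 0xFF800000, 0x7FC00000, 0x00000001, 0x3F000000):
    print(f"${pattern:08X}", classify_single(pattern), single_from_bits(pattern))
```

The last pattern, $3F000000, has a stored exponent of 126, so the actual exponent is -1 and the value is 0.5.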

An example: a single variable with bit pattern $42996CE8 = 0 10000101 00110010110110011101000

Mantissa = 00110010110110011101000 = 2⁻³ + 2⁻⁴ + 2⁻⁷ + 2⁻⁹ + 2⁻¹⁰ + 2⁻¹² + 2⁻¹³ + 2⁻¹⁶ + 2⁻¹⁷ + 2⁻¹⁸ + 2⁻²⁰ = 0.1986360549927

Add the implicit 1: 1.1986360549927

Exponent = 10000101 = 133; subtract the bias of 127: 133 - 127 = 6

Sign = 0 = positive

The stored value is thus: 1.1986360549927 × 2⁶ = 1.1986360549927 × 64 ≈ 76.71270752 (the exact stored value is 76.71270751953125; the mantissa shown above is rounded to 13 decimal places)
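The same decoding can be sketched in Python and cross-checked against the machine's own interpretation of the bit pattern. The function name `decode_single` is hypothetical, and the sketch handles normalised numbers only.

```python
import struct


def decode_single(bits: int):
    """Split a 32-bit pattern into its fields and rebuild the value by hand."""
    sign = bits >> 31                        # bit 31
    stored_exponent = (bits >> 23) & 0xFF    # bits 23-30
    fraction = bits & 0x7FFFFF               # bits 0-22
    # Normalised numbers only: add the implicit leading 1, remove the bias
    significand = 1 + fraction / 2**23
    exponent = stored_exponent - 127
    value = (-1) ** sign * significand * 2.0 ** exponent
    return sign, exponent, significand, value


sign, exponent, significand, value = decode_single(0x42996CE8)
print(sign, exponent, significand, value)

# Cross-check: let struct reinterpret the same 32 bits as a single
hardware_value = struct.unpack(">f", struct.pack(">I", 0x42996CE8))[0]
print(hardware_value == value)
```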

The double format follows the same principles, but its mantissa and exponent fields are larger, so it can store numbers with greater precision and a wider range. The 80-bit extended format, however, differs slightly from its single and double counterparts in that the integer part is explicitly stored in bit 63. This integer part in bit 63 absorbs any carry values, and the resulting 64-bit mantissa gives a precision of up to 19 decimal digits. By contrast, in the single and double formats the integer part is implied and, for normalised numbers, is always one.
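To see that the double format follows the same principles, the fields of a double can be extracted in the same way as for a single. This Python sketch uses the IEEE 754 exponent bias of 1023 for doubles, a figure that is not given in the text above; the function name `decode_double` is hypothetical.

```python
import struct


def decode_double(value: float):
    """Return (sign, unbiased exponent, 52-bit fraction) of a double."""
    bits = struct.unpack(">Q", struct.pack(">d", value))[0]
    sign = bits >> 63                          # bit 63
    stored_exponent = (bits >> 52) & 0x7FF     # bits 52-62
    fraction = bits & ((1 << 52) - 1)          # bits 0-51
    return sign, stored_exponent - 1023, fraction


sign, exponent, fraction = decode_double(76.71270751953125)
print(sign, exponent, f"{fraction:052b}")
```

For this value, which fits exactly in a single, the 52-bit fraction starts with the same 23 bits as the single's mantissa, followed by 29 zero bits.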

It's also important to know that the FPU internally always works with the 80-bit extended format (also referred to as the temporary real format). This means that every time you load a single or double value into the FPU, it is converted to the extended format.

The following table gives an overview of the three common floating point formats discussed in this article:

| Format | Mantissa | Exponent | Sign |
| --- | --- | --- | --- |
| Single | bits 0-22 | bits 23-30 | bit 31 |
| Double | bits 0-51 | bits 52-62 | bit 63 |
| 80-bit Extended | bits 0-63(*) | bits 64-78 | bit 79 |

(*) Note that unlike for single and double, the extended format stores the most significant bit of the mantissa explicitly. See the text for further information.
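Python has no native 80-bit type, but the extended layout from the table can be sketched by building the fields by hand. The exponent bias of 16383 is the value I understand the extended format to use and is not stated in this article. The function names are hypothetical, and the sketch handles finite, non-zero values only.

```python
import math


def to_extended_fields(value: float):
    """Return (sign, stored exponent, 64-bit mantissa) for a finite, non-zero float."""
    if value == 0 or math.isinf(value) or math.isnan(value):
        raise ValueError("this sketch handles finite, non-zero values only")
    sign = 1 if value < 0 else 0
    # frexp yields abs(value) == m * 2**e with 0.5 <= m < 1
    m, e = math.frexp(abs(value))
    # Scale m to a 64-bit integer; since m >= 0.5, bit 63 (the explicit
    # integer part) is always set
    mantissa64 = int(m * 2**64)
    stored_exponent = (e - 1) + 16383   # assumed bias for the extended format
    return sign, stored_exponent, mantissa64


def to_extended_bytes(value: float) -> bytes:
    """Pack the fields into 10 little-endian bytes: mantissa, exponent, sign."""
    sign, stored_exponent, mantissa64 = to_extended_fields(value)
    bits = (((sign << 15) | stored_exponent) << 64) | mantissa64
    return bits.to_bytes(10, "little")


sign, stored_exponent, mantissa64 = to_extended_fields(76.71270751953125)
print(sign, hex(stored_exponent), hex(mantissa64))
print(to_extended_bytes(76.71270751953125).hex())
```

Note how the top bit of the 64-bit mantissa is set explicitly, whereas the single and double encodings of the same value leave that bit out.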
